The Hidden Logics of Search

The Black Box Society

The concepts discussed in The Hidden Logics of Search were whitelisting, search transparency, personalised advertising, search as a ‘leveller’, and the autocomplete and autocorrect functions of search.

The article describes search as a leveller: something that treats or affects all people in the same way. Search gives anyone with a computer or a nearby library access to resources that were once out of reach. Yet by tracking our activity online, it also has the power to give each of us our own individual world, tailored to our personal interests and preferences. To justify this collection of personal data, which some may regard as an infringement, large corporations such as Google play it down, presenting it as the route to a better user experience.

For most of us, the easiest way to find the information we want is to type a query into Google or another search engine. Search engines are revolutionary; they have become a social leveller by offering free content to everyone. Through this convenient, free and unlimited access to information, search engines have gained the trust of consumers. Users come to rely on the convenience of these tech giants, and this reliance, combined with our own inertia, means the companies often determine which subjects reach our awareness. Search has made cultural, economic and political inroads; the case of Santorum and Savage, in which columnist Dan Savage's campaign to give Senator Rick Santorum's surname a vulgar new meaning came to dominate search results for his name, highlights how easily search can be manipulated.

The Hidden Logics of Search examines whether the tech giants have abused this trust, which leads us to question its legitimacy: whether consumer trust is fully warranted, merely expected, or rests on a lack of knowledge about their methods. A key example is that we trust search engines to sort web pages automatically and show us the most relevant ones, so that users do not have to. Companies such as Foundem, a price-comparison site competing with Google's own shopping search, ran into difficulties over relevance in search results (the issue of whitelisting) because Google's algorithms flagged the site as spam. Google is a multi-platform online giant, so it could be argued that it once again abused user trust by using its power to covertly eliminate competition; for a company such as Foundem, a decline in Google traffic can be devastating to its future. This kind of online tyranny was not overtly apparent to the public in 2009.

One might ask whether these online powerhouses have more political motivation than the average person would suspect. When Occupy Wall Street began to gain mass media attention in 2011, Twitter, the global social networking giant, appeared not to list the subject as ‘trending’. When questioned, a Twitter communications spokesperson stated that this was solely due to its algorithm, which measures the velocity of tweets at a given time rather than the overall popularity of a subject. Online platforms thus feed us information that we regard as trustworthy, but their transparency is questionable; it can be argued that political, economic and social motives lie behind everything we see, and do not see, online.
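Twitter has not published the details of its trending algorithm, so the following is only a minimal sketch of the distinction the spokesperson drew between velocity and popularity. It assumes a simple, hypothetical definition of velocity: the tweet count in the latest hour divided by the average hourly count before it. The topic names and counts are invented for illustration.

```python
def total_volume(hourly_counts):
    """Overall popularity: total number of tweets about a topic."""
    return sum(hourly_counts)


def velocity(hourly_counts):
    """Hypothetical velocity: the latest hour's count relative to the
    average hourly count before it (how fast a topic is accelerating)."""
    if len(hourly_counts) < 2:
        raise ValueError("need at least two hours of data")
    *history, latest = hourly_counts
    baseline = sum(history) / len(history)
    return latest / baseline if baseline else float("inf")


# Invented hourly tweet counts for two hypothetical topics.
topics = {
    "steady_topic": [100] * 10,      # high, constant volume: 1000 tweets
    "sudden_topic": [5] * 9 + [80],  # low volume with a late spike: 125 tweets
}

most_popular = max(topics, key=lambda name: total_volume(topics[name]))
trending = max(topics, key=lambda name: velocity(topics[name]))
print(most_popular, trending)  # steady_topic sudden_topic
```

Under this assumed measure, a subject that is discussed heavily but steadily scores a velocity of only 1.0, while a much smaller topic that suddenly spikes scores 16.0 and would be the one shown as trending.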

“Better user experience is the reason major internet companies give for almost everything they do.” Google introduced autocomplete to make searching easier: the user no longer has to type a query in full, because algorithms predict what they are searching for. These predictions usually reflect the search activity of users combined with the content of web pages indexed by Google. The case of Bettina Wulff shows that ‘better user experience’ is not always a sufficient justification. Whenever Wulff's name was typed into Google's search engine, ‘Bettina Wulff prostituierte’ (prostitute) and ‘Bettina Wulff escort’ appeared among the autocomplete suggestions, leading Wulff to fear that people would judge her character on these rumours rather than on the facts, including her legal battles against slander. In response, Google denied responsibility, stating that it is the user's obligation to judge the validity of the web pages they read. In a world where users trust Google to correct their spelling and finish their searches for them, it is unclear why the company would attach only negative words to Wulff's name rather than the full facts behind her story. Some would argue that this could be a tactic to drive traffic through Google's pages, leaving us to question whether user experience comes first at all. If Google are willing to cross such boundaries for traffic, one must ask whether they are always trying to inform us and give us the right answers, or whether they are feeding us what they want us to see.

This raises a question of social morality: if a person is wrongly defamed online because of Google's ‘better user experience’ algorithms, do Google have a moral obligation to give us the truth as it is in fact (de facto), or do they give us only what the law requires (de jure) to secure the approval and loyalty of their shareholders?
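The autocomplete mechanism described above can be illustrated with a minimal sketch. It assumes, as a simplification, that suggestions are ranked purely by how often past users typed each query; real systems such as Google's also draw on indexed page content and apply filtering, which this sketch leaves out. The person's name and the query log are hypothetical.

```python
from collections import Counter


def build_suggester(query_log):
    """Return a function that suggests completions for a typed prefix.

    Simplifying assumption: completions are ranked only by how often each
    query appears in the log of past searches.
    """
    # Normalise queries so "Jane Doe" and "jane doe" count as the same search.
    counts = Counter(query.strip().lower() for query in query_log)

    def suggest(prefix, k=3):
        prefix = prefix.strip().lower()
        # Every past query that starts with what the user has typed so far.
        matches = [(q, n) for q, n in counts.items() if q.startswith(prefix)]
        # Most frequent first; ties broken alphabetically for a stable order.
        matches.sort(key=lambda item: (-item[1], item[0]))
        return [q for q, _ in matches[:k]]

    return suggest


# Hypothetical search log for an invented person, "Jane Doe".
query_log = (
    ["jane doe rumour"] * 5
    + ["jane doe biography"] * 2
    + ["Jane Doe lawsuit"]
)

suggest = build_suggester(query_log)
print(suggest("jane doe"))
# ['jane doe rumour', 'jane doe biography', 'jane doe lawsuit']
```

Nothing in this ranking checks whether a suggestion is true: a rumour that many people search for rises to the top simply because it is searched for often.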

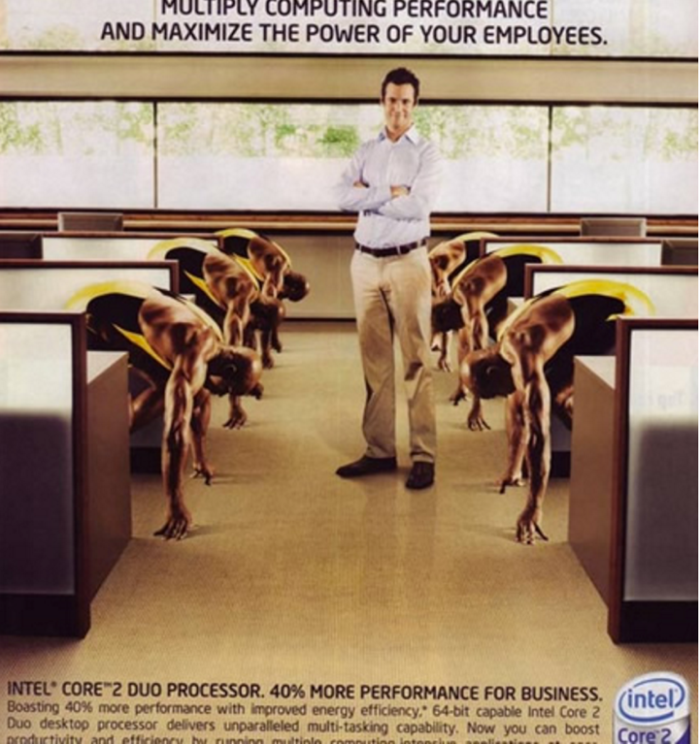

2007 Intel Core 2 Duo processor ad
